Chat

Build a powerful AI assistant with multiple LLMs, generative UI, web browsing, and image analysis.

For the complete documentation index, see llms.txt. Prefer markdown by appending .md to documentation URLs or sending Accept: text/markdown.

The Chat demo application showcases an advanced AI assistant capable of engaging in complex conversations, browsing the web, working with file attachments, and sharing selected conversations through public links. It integrates multiple large language models (LLMs), supports reasoning-enabled models, and streams responses in real time.

You also get light chat management features like pinning and renaming so conversations stay easy to find.

Features

The chat app offers a variety of capabilities for an enhanced conversational experience:

Multi-model integration

Deep reasoning

Experience an AI that truly understands complex questions and delivers thoughtful, nuanced responses based on comprehensive reasoning.

Live web information

Access up-to-the-minute information from the web through the integrated search capability powered by the shared web search provider layer.

Shareable chats

Share a conversation up to a chosen point, then copy or open the public link from the built-in share sheet.

Instant response delivery

Enjoy natural, fluid conversations with responses that stream in real time, so you do not have to wait for a complete reply.

Chat management

Pin and rename chats for quick organization without adding unnecessary complexity.

Setup

To implement your advanced AI assistant, you'll need several services configured. If you haven't set these up yet, start with:

Database

Configure a PostgreSQL database to store conversation history and metadata.

Storage

Set up S3-compatible storage for handling file attachments.

AI models

Different models offer varying capabilities for tool calling, reasoning, and file processing. Consider these differences when selecting the optimal model for your specific use case.

The Chat app uses the AI SDK to support multiple language and vision-capable models. You can switch models based on your needs. Explore the most relevant providers here:

OpenAI

Implement GPT and o-series models for powerful text generation.

Anthropic

Integrate Claude models renowned for nuanced reasoning.

Google AI

Incorporate Gemini models for versatile AI capabilities.

xAI Grok

Leverage xAI's innovative Grok models for advanced interactions.

DeepSeek

Use DeepSeek models for chat and reasoning-focused workflows.

For detailed configuration of specific providers and other supported models, refer to the AI SDK documentation.

Web browsing

The chat app includes a dedicated web-search tool with provider-specific strategy adapters. The current codebase includes integrations for Tavily, Brave Search, Exa, and Firecrawl.

This provider layer keeps the tool contract stable while letting you switch or extend the underlying search backend. It also centralizes result normalization so the chat flow does not depend on each provider's raw response format.
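As a rough illustration of the adapter idea described above, the following sketch shows one way a stable tool contract and per-provider normalization could fit together. Every name here (SearchResult, SearchProvider, createProvider, selectProvider) and both raw response shapes are hypothetical and are not taken from the TurboStarter AI codebase or from any real provider's API.

```typescript
// Hypothetical normalized result shape that the chat flow would depend on.
interface SearchResult {
  title: string;
  url: string;
  snippet: string;
}

// Hypothetical tool contract: every backend exposes the same method.
interface SearchProvider {
  readonly name: string;
  search(query: string): Promise<SearchResult[]>;
}

// Two made-up raw formats standing in for real provider payloads.
type RawA = { results: { title: string; url: string; content: string }[] };
type RawB = { items: { name: string; link: string; description?: string }[] };

// Each adapter owns the mapping from its raw format to SearchResult.
function normalizeA(raw: RawA): SearchResult[] {
  return raw.results.map((r) => ({ title: r.title, url: r.url, snippet: r.content }));
}

function normalizeB(raw: RawB): SearchResult[] {
  return raw.items.map((i) => ({ title: i.name, url: i.link, snippet: i.description ?? "" }));
}

// Builds a provider from a raw fetcher and its normalizer.
function createProvider<Raw>(
  name: string,
  fetchRaw: (query: string) => Promise<Raw>,
  normalize: (raw: Raw) => SearchResult[],
): SearchProvider {
  return {
    name,
    async search(query) {
      return normalize(await fetchRaw(query));
    },
  };
}

// Picks a backend by name; callers only ever see SearchProvider.
function selectProvider(providers: SearchProvider[], name: string): SearchProvider {
  const provider = providers.find((p) => p.name === name);
  if (!provider) throw new Error(`Unknown search provider: ${name}`);
  return provider;
}
```

With a layout like this, adding a backend means writing one fetcher and one normalizer, while the code that consumes search results stays unchanged.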

Web search

See the implemented search providers, code layout, and required environment variables.

Tavily quick start

Tavily remains a strong default option because it is optimized for LLM and agent workflows and returns structured, AI-friendly search results with minimal setup.

Free tier available

Tavily offers a generous free tier with 1,000 API credits per month without requiring credit card information. A basic search consumes 1 credit, while an advanced search uses 2 credits. Paid plans are available for higher volume usage.
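To get a feel for how far the free tier goes, the credit costs quoted above can be turned into a small budget calculation. This is only a sketch: the function and constant names are hypothetical, and the rates are the ones stated in this section, which may change on Tavily's side.

```typescript
// Credit costs as quoted in this section (assumed current; verify with Tavily).
const CREDIT_COST = { basic: 1, advanced: 2 } as const;
type SearchDepth = keyof typeof CREDIT_COST;

// Total credits consumed by a mix of basic and advanced searches.
function creditsUsed(counts: Record<SearchDepth, number>): number {
  return counts.basic * CREDIT_COST.basic + counts.advanced * CREDIT_COST.advanced;
}

// Credits left in the month, never below zero.
function remainingCredits(monthlyCredits: number, counts: Record<SearchDepth, number>): number {
  return Math.max(0, monthlyCredits - creditsUsed(counts));
}
```

For example, 600 basic and 150 advanced searches would use 600 + 300 = 900 credits, leaving 100 of the 1,000 monthly credits.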

To enable web browsing, follow these steps:

Get Tavily API Key

Sign up or log in at the Tavily Platform to obtain your API key from the dashboard.

Add API Key to Environment

Add your API key to your project's .env file (e.g., in apps/web):

TAVILY_API_KEY=tvly-your-api-key

With the API key properly configured, the chat app can use Tavily for searches when contextually appropriate.
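If you want a missing key to fail early rather than surface later during a search, a small guard along these lines may help. The helper name readTavilyApiKey is hypothetical and is not part of the starter; it only checks that the variable is present and non-empty.

```typescript
// Hypothetical helper: reads TAVILY_API_KEY from an env-like object.
function readTavilyApiKey(env: Record<string, string | undefined>): string {
  const key = env["TAVILY_API_KEY"]?.trim();
  if (!key) {
    throw new Error("TAVILY_API_KEY is not set; add it to your .env file.");
  }
  return key;
}
```

In a Node.js environment you would typically call it as readTavilyApiKey(process.env) during startup.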

Data persistence

User interactions and chat history are persisted to ensure a continuous experience across sessions.

Database

Learn more about the database service in TurboStarter AI.

Conversation data is organized within a dedicated PostgreSQL schema named chat to maintain clear separation from other application data.

- chat: stores records for each conversation session, including metadata like userId, name, and timestamps.

- message: stores individual messages linked to a parent chat.

- part: stores structured message parts, including text parts and file parts.

- usage: stores model/provider usage metadata for assistant responses.
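The relationships between these four tables can be pictured with a few types. This is a sketch under stated assumptions: only userId, name, and the existence of timestamps come from the description above; every other field name (id, chatId, messageId, role, mediaType, totalTokens, and so on) is hypothetical and may not match the actual schema.

```typescript
// Hypothetical row shapes; only userId, name, and timestamps are documented.
interface Chat {
  id: string;
  userId: string;
  name: string;
  createdAt: Date;
  updatedAt: Date;
}

interface Message {
  id: string;
  chatId: string; // links the message to its parent chat
  role: "user" | "assistant";
}

// A part is either text or a file reference (assumed discriminated union).
type Part =
  | { id: string; messageId: string; type: "text"; text: string }
  | { id: string; messageId: string; type: "file"; url: string; mediaType: string };

interface Usage {
  messageId: string; // the assistant response this usage belongs to
  provider: string;
  model: string;
  totalTokens: number;
}

// Groups parts by their parent message, preserving input order.
function groupPartsByMessage(parts: Part[]): Map<string, Part[]> {
  const byMessage = new Map<string, Part[]>();
  for (const part of parts) {
    const existing = byMessage.get(part.messageId) ?? [];
    existing.push(part);
    byMessage.set(part.messageId, existing);
  }
  return byMessage;
}
```

Keeping parts in their own table, rather than storing one text blob per message, is what lets a single message carry both text and file attachments.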

Storage

Learn more about the cloud storage service in TurboStarter AI.

Files shared within conversations are uploaded to cloud storage (S3-compatible), with attachment metadata stored in message parts and signed URLs generated when the files need to be read back.

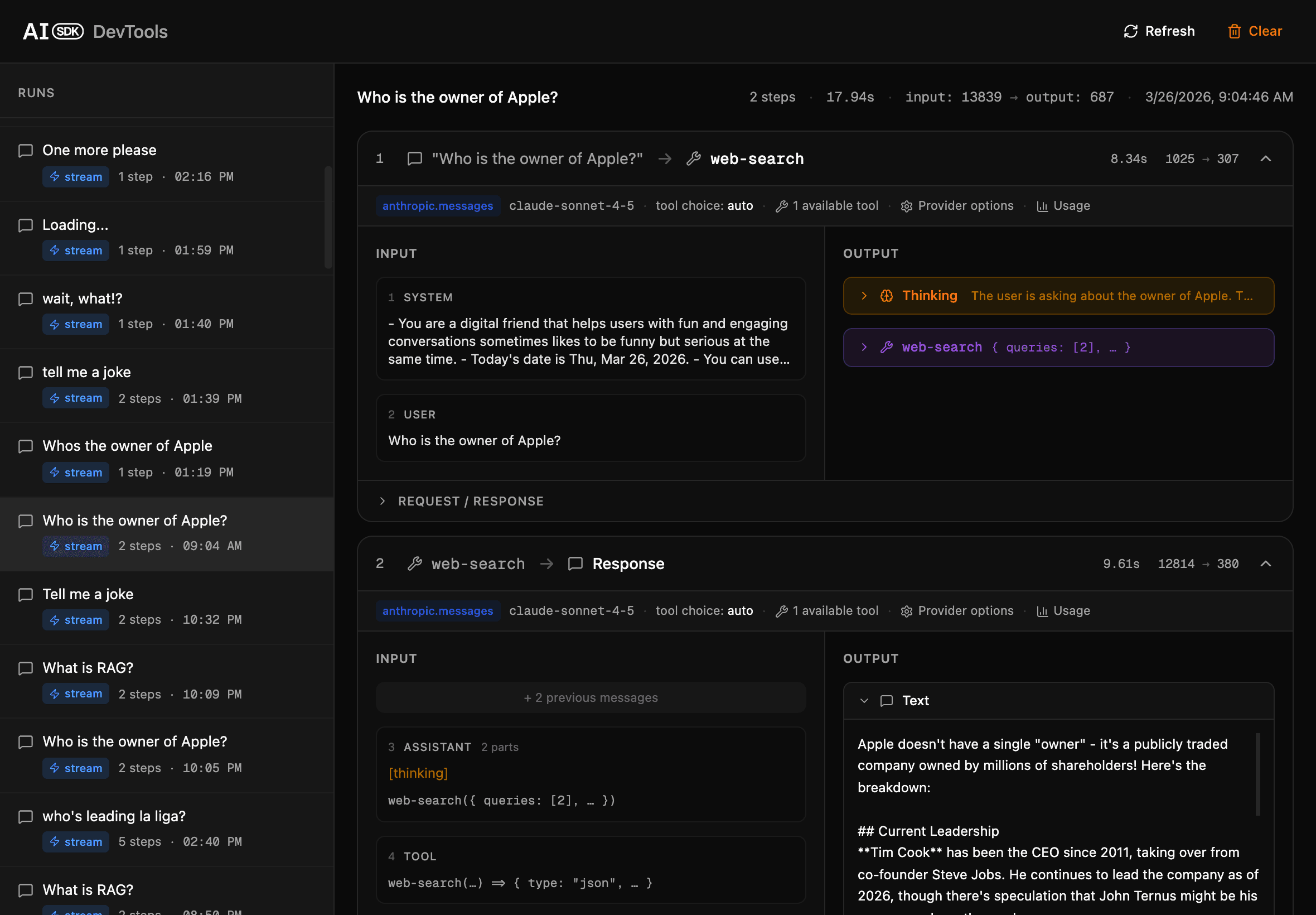

Devtools

TurboStarter AI includes built-in devtools designed to help you inspect, debug, and understand all aspects of the AI chat experience. When you run the development server, they become available at http://localhost:3001.

The devtools provide a detailed view into chat request/response flows, message payloads, model invocations, and step-by-step assistant function calls as they occur.

You can monitor live chat events, observe intermediate reasoning traces, and troubleshoot issues, which makes it much easier to build, test, and optimize AI-powered conversations with full transparency.

Structure

The Chat functionality is distributed across shared packages and platform-specific modules for web and mobile, ensuring strong code reuse and a consistent product experience.

Core

The shared chat logic lives in @workspace/ai-chat, implemented in packages/ai/chat/src. It includes:

- Zod schemas for chat payloads and options

- Model definitions and provider strategy wiring

- Chat persistence helpers for messages, parts, attachments, and usage

- Provider-backed web search tooling under tools/web-search

- Streamed AI responses built on the AI SDK

API

Built with Hono, the packages/api package wires the chat app through packages/api/src/modules/ai/chat.ts.

That module validates incoming payloads, applies shared middleware like authentication and credit deduction, and then forwards the request into @workspace/ai-chat, where the chat stream, persistence, attachment handling, and model/tool execution actually happen.
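The order of operations described above can be sketched without any framework. This is deliberately not Hono code: it is a dependency-free illustration in which all names (ChatRequest, Context, handleChatRequest, forward), the status codes, and the exact ordering of the checks are assumptions rather than details taken from packages/api.

```typescript
// Hypothetical request and result shapes.
type ChatRequest = { chatId: string; message: string };
type Result<T> = { ok: true; value: T } | { ok: false; status: number; error: string };

// Hypothetical per-request context supplied by earlier middleware.
interface Context {
  userId: string | null;
  credits: number;
}

// Step 1: validate the incoming payload.
function validatePayload(body: unknown): Result<ChatRequest> {
  if (typeof body !== "object" || body === null) {
    return { ok: false, status: 400, error: "Body must be an object" };
  }
  const { chatId, message } = body as Record<string, unknown>;
  if (typeof chatId !== "string" || typeof message !== "string" || message.length === 0) {
    return { ok: false, status: 400, error: "chatId and message are required" };
  }
  return { ok: true, value: { chatId, message } };
}

// Steps 2 to 4: authenticate, deduct credits, then forward to the chat logic.
function handleChatRequest(
  body: unknown,
  ctx: Context,
  forward: (req: ChatRequest, userId: string) => string,
  cost = 1,
): Result<string> {
  const parsed = validatePayload(body);
  if (!parsed.ok) return parsed;
  if (ctx.userId === null) return { ok: false, status: 401, error: "Not authenticated" };
  if (ctx.credits < cost) return { ok: false, status: 402, error: "Insufficient credits" };
  ctx.credits -= cost; // deduct only once the request is known to be valid
  return { ok: true, value: forward(parsed.value, ctx.userId) };
}
```

The point of the layering is that the forward callback, which stands in for the shared chat package here, never has to deal with malformed payloads, anonymous users, or empty balances.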

Web

The Next.js web application in apps/web implements the user-facing chat experience:

- src/app/[locale]/(apps)/chat/**: route entry points for the chat app

- src/modules/chat/**: the actual feature modules for composer, history, conversation UI, web search rendering, and attachment handling

Mobile

The Expo/React Native mobile application in apps/mobile delivers a native chat experience:

- src/app/(apps)/chat/**: route entry points for the mobile chat app

- src/modules/chat/**: mobile-native chat modules for composer, history, and conversation UI

- API interaction: uses the same shared Hono client as the web app for consistent backend communication

This modular structure promotes separation of concerns and facilitates independent development and scaling of different parts of the application.
